Queue backlogs slow down your system by increasing request wait times and creating bottlenecks, even when CPU utilization is well below 100%. Growing queues hold on to memory, threads, and connections, add delay to every request behind them, and can trigger timeouts and failures before your hardware reaches full capacity. Read on to see how strategies like load balancing and caching help prevent these issues early and keep your system responsive under heavy load.

Key Takeaways

- Queue backlogs increase request latency, causing slow response times even when CPU utilization is moderate.

- Long queues create bottlenecks that hinder resource efficiency before CPU reaches full capacity.

- Excessive queues indicate underlying issues, leading to degraded performance despite healthy CPU usage.

- Delays from backlogs can trigger performance failures prior to CPU saturation.

- Monitoring queues helps detect and address performance issues early, preventing system overload.

Have you ever wondered how queue backlogs affect overall performance? When your system starts accumulating long queues, something is usually wrong, even if your CPU isn’t anywhere near 100 percent utilization. Queue backlogs create bottlenecks that slow down processing, increase latency, and degrade the user experience, because every new request has to wait behind the ones already queued before any work is done on it. Instead of focusing solely on CPU capacity, look at how your infrastructure handles incoming requests and whether it can process them quickly enough to keep queues from building up.
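To see why queues can hurt long before 100% utilization, here is a rough sketch using the textbook M/M/1 queue (one server, random arrivals, exponentially distributed service times). Those are simplifying assumptions that real systems rarely match exactly, and the 100 requests/s service rate is a hypothetical number, but the shape of the curve is the point: the average time in the system is W = 1 / (mu - lambda), which grows sharply as utilization lambda / mu approaches 1.

```python
def mm1_latency(arrival_rate: float, service_rate: float) -> tuple[float, float, float]:
    """Return (utilization, mean queue wait, mean time in system) for an M/M/1 queue.

    Rates are in requests per second; times are in seconds.
    """
    if arrival_rate >= service_rate:
        raise ValueError("unstable queue: arrival rate must be below service rate")
    utilization = arrival_rate / service_rate
    time_in_system = 1.0 / (service_rate - arrival_rate)  # W = 1 / (mu - lambda)
    queue_wait = utilization * time_in_system             # Wq = rho / (mu - lambda)
    return utilization, queue_wait, time_in_system


# Hypothetical server that handles 100 requests/s (10 ms of work per request on average).
SERVICE_RATE = 100.0
for arrival_rate in (50.0, 80.0, 90.0, 95.0, 99.0):
    rho, wq, w = mm1_latency(arrival_rate, SERVICE_RATE)
    print(f"utilization {rho:.0%}: {wq * 1000:.0f} ms queued, {w * 1000:.0f} ms total")
```

Under these assumptions, at 90% utilization the average request already spends 90 ms waiting in line for 10 ms of actual work, and at 99% it waits close to a full second.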

Queue backlogs slow processes and degrade user experience, even if CPU utilization seems normal.

One key factor in managing queue backlogs is effective load balancing. By distributing incoming requests across multiple servers or processes, load balancing keeps any single point from becoming overwhelmed. A properly configured load balancer makes it much less likely that an individual server becomes a bottleneck, which reduces the risk of backlogs forming and lets you absorb traffic spikes without sacrificing performance. Load balancing policies that track server load can also adapt dynamically, steering requests to underutilized servers and maintaining throughput under heavy load. Understanding how your system scales also helps you design infrastructure that handles growth effectively.
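One common way to steer traffic toward underutilized servers is a "least outstanding requests" (least-connections) policy: each new request goes to the backend with the fewest requests currently in flight. The sketch below is a minimal single-process illustration; the class and backend names are hypothetical, it is not thread-safe, and a production load balancer would also handle health checks and timeouts.

```python
class LeastOutstandingBalancer:
    """Route each request to the backend with the fewest in-flight requests (hypothetical helper)."""

    def __init__(self, backends: list[str]):
        if not backends:
            raise ValueError("need at least one backend")
        self.in_flight = {name: 0 for name in backends}

    def acquire(self) -> str:
        # Pick the least-busy backend; ties go to the earliest-listed backend.
        backend = min(self.in_flight, key=self.in_flight.get)
        self.in_flight[backend] += 1
        return backend

    def release(self, backend: str) -> None:
        # Call when the backend finishes a request so its count drops again.
        self.in_flight[backend] -= 1


balancer = LeastOutstandingBalancer(["app-1", "app-2", "app-3"])
first = [balancer.acquire() for _ in range(3)]  # one request per backend
balancer.release("app-2")                       # app-2 finishes early
print(first, balancer.acquire())                # the next request goes to app-2
```

Compared with plain round-robin, this policy reacts to slow backends: a server stuck on long requests accumulates in-flight work and stops receiving new traffic until it catches up.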

Another essential strategy for mitigating queue backlogs is distributed caching. A distributed cache keeps frequently accessed data in fast storage closer to the users or processing nodes, which cuts the time it takes to retrieve that data. When retrieval is faster, each request finishes sooner, spends less time waiting in queues, and overall throughput improves. Caching also reduces the load on backend databases and services, which keeps them from becoming bottlenecks. Distributed caching complements load balancing: load balancing spreads requests out, while caching reduces the processing time per request, and together they keep queues from growing too large. Monitoring tools that provide real-time insight into both layers help you spot potential bottlenecks before they escalate.
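A back-of-the-envelope calculation shows why shorter per-request work shrinks queues. If a fraction h of requests hit the cache, the average service time is h * t_hit + (1 - h) * t_miss, and utilization is arrival rate times average service time (the utilization law). The arrival rate, hit ratios, and timings below are made-up illustrative numbers, not measurements.

```python
def effective_service_time(hit_ratio: float, hit_time_s: float, miss_time_s: float) -> float:
    """Average time to serve one request when some fraction is answered from cache."""
    return hit_ratio * hit_time_s + (1.0 - hit_ratio) * miss_time_s


def utilization(arrival_rate: float, service_time_s: float) -> float:
    """Utilization law: U = X * S (throughput times mean service time)."""
    return arrival_rate * service_time_s


# Illustrative assumptions: 90 req/s, 10 ms per uncached request, 1 ms per cache hit.
ARRIVALS = 90.0
MISS_TIME, HIT_TIME = 0.010, 0.001

for hit_ratio in (0.0, 0.5, 0.8):
    s = effective_service_time(hit_ratio, HIT_TIME, MISS_TIME)
    backend_rate = ARRIVALS * (1.0 - hit_ratio)  # requests that still reach the backend
    print(f"hit ratio {hit_ratio:.0%}: utilization {utilization(ARRIVALS, s):.0%}, "
          f"backend load {backend_rate:.0f} req/s")
```

With these numbers, an 80% hit ratio lowers the average service time to 2.8 ms, dropping utilization from 90% to about 25% and cutting backend traffic from 90 to 18 req/s.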

However, even with load balancing and distributed caching in place, you still need to monitor your system closely. If queues start to grow, your infrastructure isn’t keeping pace with demand, and you may need to scale horizontally by adding servers or tune your caching strategy. Don’t ignore these signals: CPU utilization hitting 100 percent is not the only way performance can falter. Queue backlogs can cause delays and failures well before that point, degrading performance in ways that aren’t immediately obvious. Tracking queue depth and wait times alongside CPU metrics lets you make adjustments before issues become critical.
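Here is a minimal sketch of what "watching the queue" can mean in practice: sample queue depth and completion rate periodically, flag sustained growth, and estimate wait time with Little's law (average wait = average queue length / throughput). The class name, thresholds, and sample values are hypothetical, and because Little's law describes long-run averages, applying it to a single sample only gives a rough estimate.

```python
from collections import deque


class BacklogMonitor:
    """Flag sustained queue growth and long estimated waits (hypothetical helper)."""

    def __init__(self, growth_samples: int = 3, max_wait_s: float = 1.0):
        self.growth_samples = growth_samples
        self.max_wait_s = max_wait_s
        # Keep one extra sample so growth_samples consecutive increases can be compared.
        self.depths = deque(maxlen=growth_samples + 1)

    @staticmethod
    def estimated_wait(depth: int, throughput_per_s: float) -> float:
        # Little's law, L = lambda * W, rearranged to W = L / lambda.
        return depth / throughput_per_s if throughput_per_s > 0 else float("inf")

    def observe(self, depth: int, throughput_per_s: float) -> list[str]:
        """Record one sample of queue depth and completion rate; return any alerts."""
        self.depths.append(depth)
        alerts = []
        window = list(self.depths)
        if len(window) == self.depths.maxlen and all(b > a for a, b in zip(window, window[1:])):
            alerts.append(f"queue grew for {self.growth_samples} consecutive samples")
        wait = self.estimated_wait(depth, throughput_per_s)
        if wait > self.max_wait_s:
            alerts.append(f"estimated wait {wait:.1f}s exceeds {self.max_wait_s:.1f}s")
        return alerts


monitor = BacklogMonitor()
for depth in (10, 40, 120, 450):  # hypothetical samples taken every few seconds
    print(depth, monitor.observe(depth, throughput_per_s=90.0))
```

Neither alert looks at CPU at all, which is the point: a queue of 450 requests draining at 90 req/s means roughly a 5-second wait regardless of how busy the processor is.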

In the end, preventing queue backlogs requires a proactive approach that combines load balancing, distributed caching, and continuous monitoring. Addressing all three helps your system stay responsive, scale efficiently, and perform well even when traffic surges or unexpected demand hits.


Frequently Asked Questions

How Do Queue Backlogs Differ From CPU Utilization?

CPU utilization tells you what fraction of time the processor is busy; a queue backlog tells you how much work is waiting to be served. The two can diverge: tasks can sit in a queue waiting on a lock, a thread-pool slot, memory, or I/O while the CPU is partly idle, so performance suffers even though CPU usage isn’t maxed out. Backlogs often stem from scheduling problems or memory bottlenecks that CPU utilization alone doesn’t reveal, which is why you need to track and address them directly.
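The gap between utilization and backlog is easy to reproduce. In this sketch, 20 requests each block for 50 ms (`time.sleep` stands in for a database or network call, which is an assumption) while only 2 worker threads are available. Pending requests pile up in the executor's internal work queue, yet the process uses very little CPU time.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def io_bound_request(submitted_at: float) -> float:
    """Simulate a request that blocks on I/O and return how long it sat in the queue."""
    started_at = time.perf_counter()
    time.sleep(0.05)  # stand-in for a 50 ms database or network call (assumption)
    return started_at - submitted_at


def run_demo(workers: int = 2, requests: int = 20) -> tuple[float, float, float]:
    """Return (worst queue wait, wall-clock time, CPU time) in seconds."""
    cpu_start = time.process_time()
    wall_start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # perf_counter() is evaluated at submit time, so each task knows when it was queued.
        futures = [pool.submit(io_bound_request, time.perf_counter()) for _ in range(requests)]
        waits = [f.result() for f in futures]
    wall = time.perf_counter() - wall_start
    cpu = time.process_time() - cpu_start
    return max(waits), wall, cpu


worst_wait, wall, cpu = run_demo()
print(f"worst queue wait {worst_wait:.2f}s, wall time {wall:.2f}s, CPU time {cpu:.3f}s")
```

The last requests wait roughly 0.45 s before a worker even picks them up, while the CPU time stays a small fraction of the wall time, so a CPU graph alone would suggest the system is nearly idle.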

What Hardware Factors Influence Queue Backlog Formation?

Imagine your system’s heartbeat faltering: that’s what hardware factors like memory bottlenecks and I/O contention do, and the result is queue backlogs. Limited memory slows data access (for example, when the system starts swapping to disk), while I/O contention delays disk and network operations as requests compete for the same devices. These bottlenecks choke data flow, so queues swell even before your CPU reaches full capacity. Addressing these hardware constraints keeps work flowing smoothly and prevents performance problems that CPU metrics alone won’t show.
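Operational analysis makes this concrete. Each request places a service demand on every resource (CPU time, disk time, network time); per-resource utilization is throughput times that demand, and the resource with the largest demand saturates first, capping throughput at 1 / D_max. The per-request demands below are invented for illustration.

```python
def bottleneck_report(
    throughput: float, demands_s: dict[str, float]
) -> tuple[dict[str, float], str, float]:
    """Return per-resource utilization, the bottleneck resource, and its throughput ceiling.

    demands_s maps a resource name to the seconds of that resource one request consumes.
    """
    utilizations = {name: throughput * d for name, d in demands_s.items()}  # U_k = X * D_k
    bottleneck = max(demands_s, key=demands_s.get)
    max_throughput = 1.0 / demands_s[bottleneck]  # X_max = 1 / D_max
    return utilizations, bottleneck, max_throughput


# Hypothetical per-request demands: 2 ms CPU, 8 ms disk, 3 ms network.
demands = {"cpu": 0.002, "disk": 0.008, "network": 0.003}
utils, bottleneck, x_max = bottleneck_report(100.0, demands)
print({name: f"{u:.0%}" for name, u in utils.items()}, bottleneck, f"{x_max:.0f} req/s")
print(f"CPU utilization at the disk's ceiling: {x_max * demands['cpu']:.0%}")
```

In this example the disk saturates at about 125 req/s while the CPU is only about 25% busy, so any load above that rate builds a disk queue even though CPU graphs look healthy.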

Can Software Optimizations Reduce Queue Backlogs Effectively?

Yes, software optimizations can reduce queue backlogs effectively. Refining algorithms, reducing lock contention, and balancing workloads all shorten the time each request spends being processed or waiting, so your system clears requests more smoothly and queues stop building up before CPU usage nears critical levels. Regularly reviewing and tuning your software keeps backlogs in check and improves overall responsiveness.
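As one example of reducing lock contention, the sketch below replaces a single global lock with lock striping: keys are hashed onto several independent locks, so threads updating different keys rarely wait on each other. The `StripedCounter` class and the stripe count are hypothetical choices for illustration, and in CPython the global interpreter lock limits parallel CPU work anyway, so treat this as a demonstration of the pattern rather than a benchmark.

```python
import threading
from collections import defaultdict


class StripedCounter:
    """Per-key counters guarded by a fixed set of locks (lock striping); hypothetical helper."""

    def __init__(self, stripes: int = 16):
        self._locks = [threading.Lock() for _ in range(stripes)]
        self._shards = [defaultdict(int) for _ in range(stripes)]

    def _stripe(self, key: str) -> int:
        # The same key always maps to the same stripe, so its updates stay serialized.
        return hash(key) % len(self._locks)

    def increment(self, key: str, amount: int = 1) -> None:
        i = self._stripe(key)
        with self._locks[i]:  # only threads touching this stripe contend here
            self._shards[i][key] += amount

    def get(self, key: str) -> int:
        i = self._stripe(key)
        with self._locks[i]:
            return self._shards[i].get(key, 0)


counter = StripedCounter()


def worker(user: str) -> None:
    for _ in range(1000):
        counter.increment(user)


threads = [threading.Thread(target=worker, args=(f"user-{n % 4}",)) for n in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(counter.get("user-0"))  # 2000: two threads x 1000 increments each
```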

How Do Network Delays Impact Queue Backlogs?

Ever wonder how network delays affect queue backlogs? When the network is congested, latency rises and packets arrive late or have to be retransmitted. Requests that depend on those packets take longer to complete, so they occupy workers and connections longer and newer requests pile up behind them, hurting performance even before CPU utilization hits 100%. You might notice sluggish response times or dropped connections as the system struggles to process delayed data, which shows how important network health is for maintaining good system performance.
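Little's law (requests in flight = arrival rate x latency) explains why slow network calls create backlogs without busy CPUs: every request waiting on the network still occupies a worker or connection. The sketch assumes a fixed pool of 32 workers and 200 req/s of traffic; both numbers and the function names are hypothetical.

```python
def workers_needed(arrival_rate: float, latency_s: float) -> float:
    """Little's law: average requests in flight L = lambda * W."""
    return arrival_rate * latency_s


def backlog_growth(arrival_rate: float, latency_s: float, pool_size: int) -> float:
    """Requests per second added to the queue once the pool is saturated (0 if it keeps up)."""
    pool_throughput = pool_size / latency_s  # each worker completes 1 / latency requests per second
    return max(0.0, arrival_rate - pool_throughput)


POOL_SIZE, ARRIVALS = 32, 200.0
for latency in (0.05, 0.1, 0.5):  # healthy network, slower network, congested network
    print(f"latency {latency * 1000:.0f} ms: need {workers_needed(ARRIVALS, latency):.0f} workers, "
          f"backlog grows by {backlog_growth(ARRIVALS, latency, POOL_SIZE):.0f} req/s")
```

At 500 ms of latency the pool would need about 100 workers but has only 32, so the queue grows by roughly 136 requests every second while those threads mostly sit idle waiting on the network.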

Are There Specific Workloads More Prone to Creating Backlogs?

Certain workloads, especially those with high resource contention and unpredictable, bursty workload patterns, are more prone to creating backlogs. Tasks that require extensive disk I/O, network access, or locks on shared resources tend to accumulate queues quickly. These workloads often struggle to clear queues efficiently, leading to performance bottlenecks even before CPU utilization reaches critical levels. Managing resource contention and understanding your workload patterns help you keep these backlogs from affecting your system.
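Bursty arrival patterns matter even when the average load is identical. This toy discrete-time simulation (hypothetical numbers: a capacity of 10 requests per tick and 8 arrivals per tick on average, so 80% utilization in both cases) compares a steady stream with the same traffic delivered in bursts.

```python
def simulate_backlog(arrivals_per_tick: list[int], capacity_per_tick: int) -> list[int]:
    """Return the queue length at the end of each tick for a single fixed-capacity worker."""
    backlog = 0
    history = []
    for arrivals in arrivals_per_tick:
        backlog += arrivals
        backlog -= min(backlog, capacity_per_tick)  # serve up to capacity this tick
        history.append(backlog)
    return history


steady = [8] * 20                                      # 8 requests every tick
bursty = [40 if t % 5 == 0 else 0 for t in range(20)]  # same 160 requests, in bursts
print("steady peak backlog:", max(simulate_backlog(steady, 10)))  # 0
print("bursty peak backlog:", max(simulate_backlog(bursty, 10)))  # 30
```

The steady stream never queues, while the bursty one leaves up to 30 requests waiting after each burst, even though total work and average utilization are the same.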


Conclusion

Understanding queue backlogs is like spotting a traffic jam before the highway reaches full capacity: you realize the slowdown isn’t just about an overloaded CPU but about the buildup of tasks waiting in line. By managing queues effectively, you prevent bottlenecks from choking your system’s performance. Remember, it’s not always about pushing harder, but about smoothing the flow. Keep the queues clear, and your system will run like a well-oiled machine, even before hitting that 100% mark.

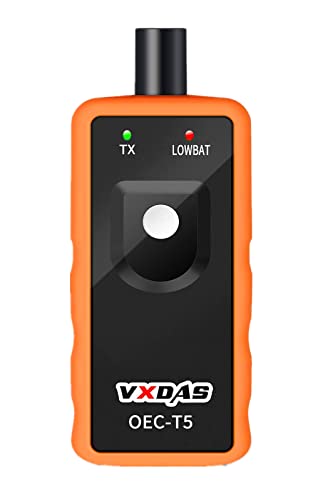

VXDAS TPMS Relearn Tool Only for GM Vehicles (2006-2024 Chevy/Buick/GMC/Opel/Cadillac) Original Sensor with 315/433 MHz, Tire Sensors Pressure Monitor System Reset Tool OEC-T5-2025 Edition

🚗2023 UPDATED FOR WIDER COMPATIBILITY: Specifically designed to work with most GM vehicles 2006-2023 (Chevy / Buick /…

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

network request queue management

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.